Welcome to our weekly FiftyOne tips and tricks blog where we recap interesting questions and answers that have recently popped up on Slack, GitHub, Stack Overflow, and Reddit.

As an open source community, the FiftyOne community is open to all. This means everyone is welcome to ask questions, and everyone is welcome to answer them. Continue reading to see the latest questions asked and answers provided!

Wait, what’s FiftyOne?

FiftyOne is an open source machine learning toolset that enables data science teams to improve the performance of their computer vision models by helping them curate high-quality datasets, evaluate models, find mistakes, visualize embeddings, and get to production faster.

- If you like what you see on GitHub, give the project a star.

- Get started! We’ve made it easy to get up and running in a few minutes.

- Join the FiftyOne Slack community; we’re always happy to help.

Ok, let’s dive into this week’s tips and tricks!

Understanding the FiftyOne Brain’s compute_hardness function

Community Slack member Ananthu asked:

“How does the compute_hardness function work under the hood? An abstract explanation would be nice!”

Community member Joy gave an excellent response:

The compute_hardness function computes the entropy, or the amount of uncertainty, in the predicted class probabilities of a model’s output.

“A classification model predicts which class a certain data point belongs to. The “raw” output of the model is often in the form of “logits”, or log-odds. Each logit corresponds to a score for a specific class. The higher the score, the more likely the model thinks the data point belongs to that class. However, these logits are not in a very interpretable form. So, they are usually transformed into probabilities using a function like softmax. The softmax function takes a vector of logits and squashes them into a range of [0, 1] such that the entire vector sums to 1.0. This way, each element in the softmax output can be interpreted as the probability of the data point belonging to a specific class.

Now, entropy is a concept borrowed from information theory. In this context, it’s used to quantify the “uncertainty” or “surprise” of a probability distribution. A uniform distribution, where all outcomes are equally likely, has the highest entropy because it is the most uncertain or surprising – you have no idea which outcome is going to occur. Conversely, a distribution where one outcome is certain to happen has an entropy of zero, because there is no surprise or uncertainty.

So, when you calculate the entropy of the softmax output, you’re calculating the uncertainty in the model’s predictions. If the entropy is low, it means the model is very confident in its predictions. If the entropy is high, it means the model is less certain about its predictions.”
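
Here is a minimal plain-Python sketch of the calculation Joy describes. Softmax turns logits into probabilities, and entropy scores how uncertain those probabilities are. The logits and sample names are made up for illustration. This shows the idea behind compute_hardness, not FiftyOne's actual implementation. It uses the natural log; a different base only rescales the scores and does not change which samples rank as hardest.

```python
import math


def softmax(logits):
    """Convert a list of logits into probabilities that sum to 1."""
    # Subtracting the max logit avoids overflow in exp() without changing the result
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(probs):
    """Shannon entropy (in nats) of a probability distribution."""
    # Zero-probability classes contribute nothing (0 * log 0 is taken as 0)
    return -sum(p * math.log(p) for p in probs if p > 0)


# Hypothetical logits from a 3-class model
confident = softmax([8.0, 0.5, 0.1])  # one class clearly dominates
uncertain = softmax([1.0, 1.0, 1.0])  # every class equally likely

print(round(entropy(uncertain), 4))  # 1.0986, i.e. ln(3), the maximum for 3 classes
print(entropy(confident) < entropy(uncertain))  # True

# Rank hypothetical samples from hardest (highest entropy) to easiest
predictions = {
    "sample_a": [8.0, 0.5, 0.1],
    "sample_b": [1.0, 1.0, 1.0],
    "sample_c": [2.0, 1.5, 0.2],
}
hardness = {name: entropy(softmax(logits)) for name, logits in predictions.items()}
ranked = sorted(hardness, key=hardness.get, reverse=True)
print(ranked)  # ['sample_b', 'sample_c', 'sample_a']
```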

Learn more about the compute_hardness function and the FiftyOne Brain in the Docs.

Custom annotation color schemes in the FiftyOne App

Community Slack member ZKW asked:

“Is it possible for a detection/segmentation label with a different class to be visualized in different colors, in the same field instead of all in the same color? If possible, I’d also like to change the bbox color in the same way.”

Community member Joy provided the following explanation:

“In the latest version of FiftyOne, you have a lot of control over the colors of your annotations. Check out the Color Scheme widget in the App toolbar.”

Learn more about color schemes and other new features in the latest FiftyOne 0.21.0 release.

Using Dynamic Groups with a video dataset

Community Slack member Amy asked:

“I would like to visualize a set of point clouds recorded in the same location over time. I have visualized the individual point clouds in FiftyOne, but is there a way to iterate through the dataset like a video clip?”

FiftyOne’s dynamic groups functionality supports this type of use case. For instance, you can group by a scene_id field and order each group by a frame number. If your data also includes stereo images, the setup is similar to our quickstart-groups dataset. Here’s sample code that loads this dataset and creates mock scene_id and frame fields:

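```python
import fiftyone as fo
import fiftyone.zoo as foz

# Load the grouped quickstart dataset from the FiftyOne Dataset Zoo
dataset = foz.load_zoo_dataset("quickstart-groups")

# Mock scene IDs: four scenes cycled across the samples (assumes 200 samples)
scene_ids = ["scene1", "scene2", "scene3", "scene4"] * 50

# Mock frame numbers; set_values() expects one value per sample, so build a list
frames = list(range(len(dataset)))

dataset.set_values("scene_id", scene_ids)
dataset.set_values("frame", frames)

# Launch the App; auto=False skips opening it automatically
session = fo.launch_app(dataset, auto=False)
```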

After launching the App, you can use the Dynamic Groups tool to group by scene_id, ordered by frame. The grid shows one group per scene, and clicking a group opens a view that lets you page through that group’s samples in frame order.
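
Conceptually, a dynamic group partitions samples by the value of one field and sorts each partition by another. The plain-Python sketch below mimics that behavior on a few hypothetical sample records that use the same mock scene_id and frame fields. It illustrates the idea and is not FiftyOne's implementation. Recent FiftyOne versions also let you build this view in code with dataset.group_by("scene_id", order_by="frame"); check the Docs for the version you have installed.

```python
from collections import defaultdict


def dynamic_groups(samples, group_field, order_field):
    """Group sample dicts by group_field, then sort each group by order_field."""
    groups = defaultdict(list)
    for sample in samples:
        groups[sample[group_field]].append(sample)
    # Sort each group so paging through it steps through the frames in order
    return {
        key: sorted(members, key=lambda s: s[order_field])
        for key, members in groups.items()
    }


# Hypothetical samples: four scenes cycled across eight frames, listed out of order
samples = [
    {"filepath": f"frame_{i:03d}.pcd", "scene_id": f"scene{i % 4 + 1}", "frame": i}
    for i in reversed(range(8))
]

groups = dynamic_groups(samples, "scene_id", "frame")
for scene_id in sorted(groups):
    print(scene_id, [s["frame"] for s in groups[scene_id]])
# scene1 [0, 4]
# scene2 [1, 5]
# scene3 [2, 6]
# scene4 [3, 7]
```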

Learn more about how to dynamically group samples in a collection by a specified field in the Docs.

Resolving FiftyOne MongoDB backend port conflicts

Community Slack member ZKW asked:

“Can anyone recommend a method for how to deal with a MongoDB port conflict when deploying 2 Docker containers on the same Linux machine? Both of them are running FiftyOne and both have this mount point:

-v /opt/.fiftyone/var/lib/mongo:/root/.fiftyone/var/lib/mongo”

Community member Joy provided the following explanation:

MongoDB explicitly prohibits running two MongoDB instances on the same data directory. More info in this Stack Overflow post.
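
Because each MongoDB instance needs its own data directory, one way around the conflict is to mount a separate host directory into each container. The sketch below only builds the docker run argument lists and does not launch anything. The container names, the image name my-fiftyone-image, and the /opt/.fiftyone-<name> host paths are hypothetical placeholders. With this setup, each container runs its own database, so the two do not share datasets.

```python
import shlex

# Hypothetical values: substitute your own image name and host paths
IMAGE = "my-fiftyone-image"
CONTAINER_MONGO_DIR = "/root/.fiftyone/var/lib/mongo"  # container path from the question


def docker_run_args(name):
    """Build `docker run` arguments that mount a host data directory unique to this container."""
    host_dir = f"/opt/.fiftyone-{name}/var/lib/mongo"
    return [
        "docker", "run", "-d",
        "--name", name,
        "-v", f"{host_dir}:{CONTAINER_MONGO_DIR}",
        IMAGE,
    ]


commands = [docker_run_args(name) for name in ("fiftyone-a", "fiftyone-b")]
for args in commands:
    # Print only; pass args to subprocess.run() to actually start a container
    print(shlex.join(args))
```

If both containers need to see the same datasets, FiftyOne can instead be pointed at a single shared MongoDB server through its database_uri setting (the FIFTYONE_DATABASE_URI environment variable).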

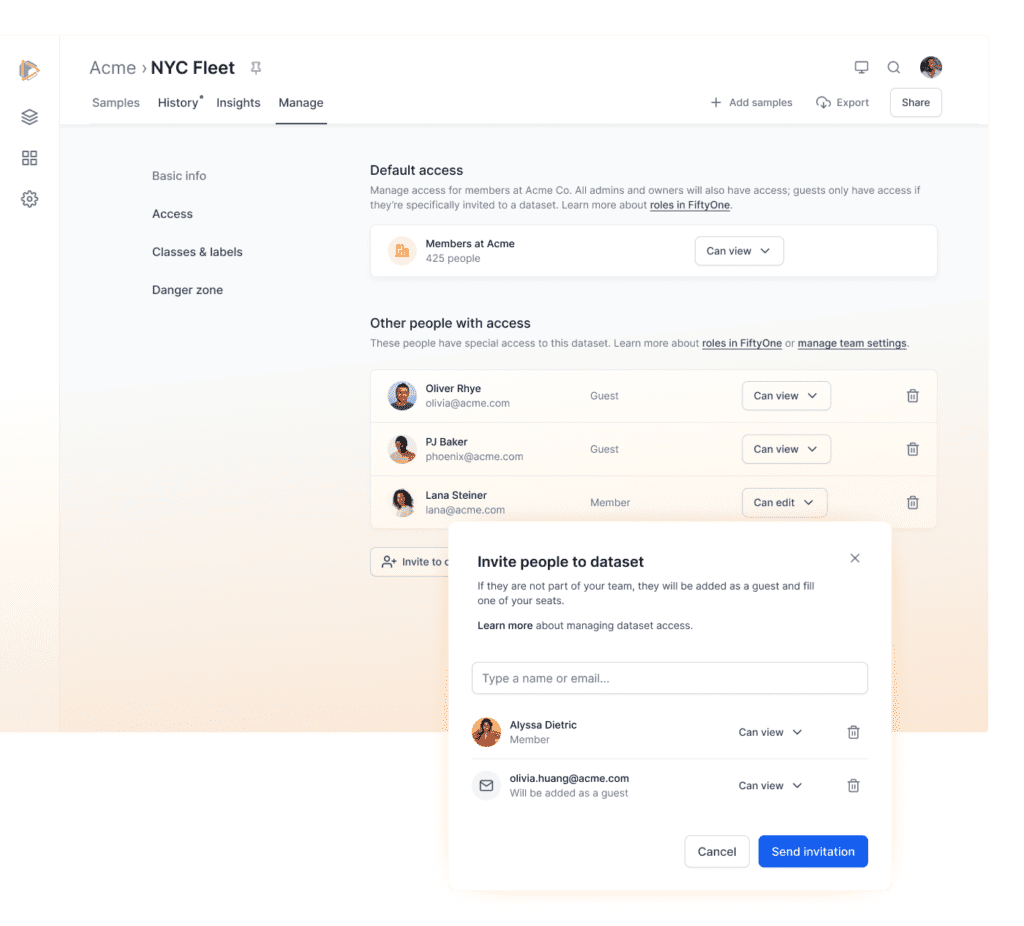

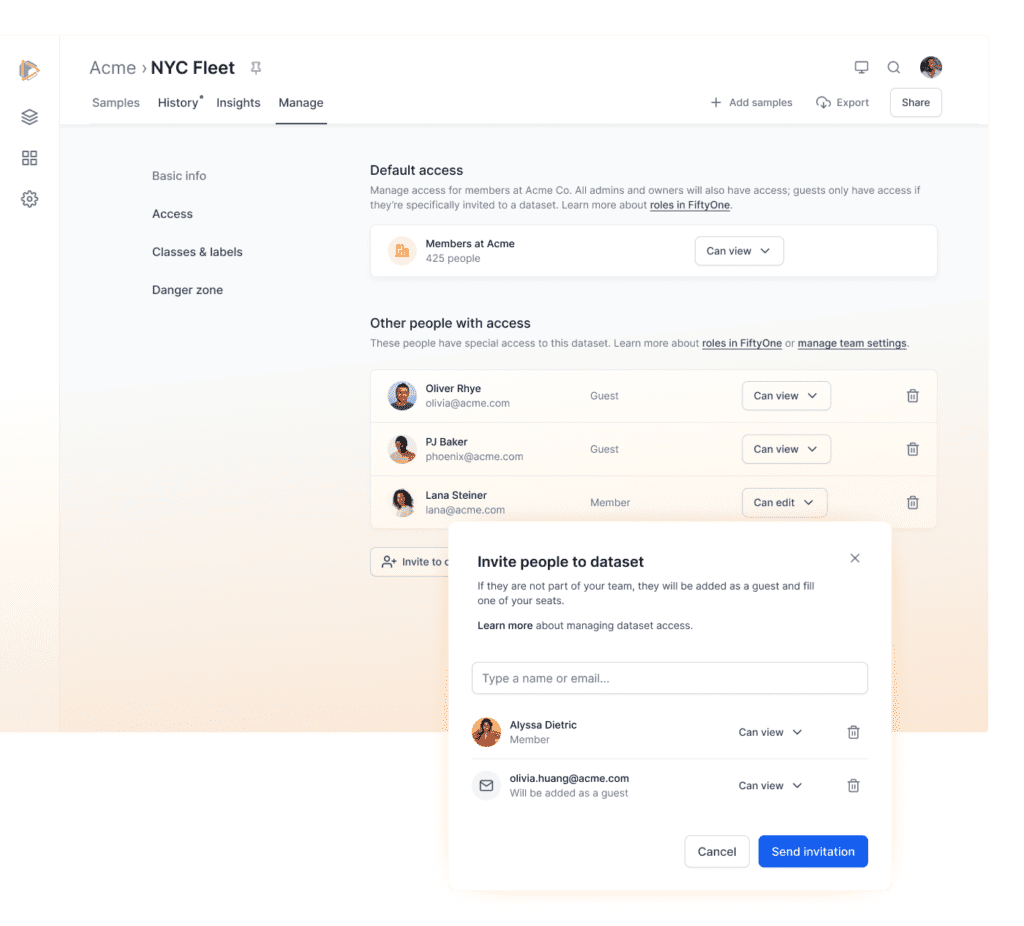

Support for multiple users in the FiftyOne App

Community Slack member Manoharan asked:

“I am running FiftyOne App, but I have a problem accessing the application with multiple users. Two users tried to open the same application and change the loaded dataset. The App automatically reloaded for the second person when a change is made by the first person. Could you suggest how multiple users can access the same FiftyOne deployment and dataset?”

The open source distribution of FiftyOne does not support multiple users because it was designed with the individual AI scientist in mind: it is a single-user, locally mounted data experience. If you need support for multiple users, cloud-based data, role-based security, and data versioning, we recommend checking out FiftyOne Teams, which was built to meet the needs of AI teams collaborating on datasets and models.

Join the FiftyOne community!

Join the thousands of engineers and data scientists already using FiftyOne to solve some of the most challenging problems in computer vision today!

- 1,700+ FiftyOne Slack members

- 3,100+ stars on GitHub

- 4,400+ Meetup members

- Used by 300+ repositories

- 60+ contributors